In one of the previous posts, I coded up a small search engine with tries, where the leaf nodes of the trie held the list of documents containing the specific word (the path in the trie). The list of documents was maintained as a binary search tree because trees are a neat way to represent sets and compute set intersections. That stops being true for a search engine once the sets involved get large: using binary search trees for the document lists uses more memory during intersection and takes more time than a sorted linked list. This is evident from the profiling snapshots below, taken with large amounts of data. Moreover, search engines use sorted lists to find the intersection faster by skipping through non-matching documents. This may seem a vague argument, but coding and profiling show that it is the better way.

New Algorithm for Set Intersection of the search engine:

So instead of binary search trees, we use a sorted linked list to store the documents for a particular word. The list is sorted by document id, so every document has to have an id. To find the intersection between two lists of documents, we follow the algorithm below.

1. Start from the beginning of the two sorted lists A and B.

2. If the documents at the current positions of A and B match, we have a document that contains both words. Add the document to the intersection list, then advance both lists.

3. Otherwise, find which of A or B has the smaller document id at the current positions. If it is A, skip through the elements of A until you find a document with id >= the current id in B. If it is B, skip through the elements of B until you find a document with id >= the current id in A.

4. If you run off the end of either list, return the intersection list.

Example

List A 1, 4, 7, 9, 11, 31, 37, 56, 143, 200, 900, 3422

List B 1, 29, 37, 56, 142

In this example, we have a match at 1 and a mismatch at the second position. List A has the smaller id, 4, compared to 29 in B, so we skip through A until we reach 31. Now the case is reversed: B has 29 and A has 31, so we skip in B to 37. Then we skip in A to 37: a match! So the idea is to skip non-matching elements in the lists. Once B is exhausted, we are also saved from having to go through the rest of A.
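The steps above can be sketched in Java roughly as follows. This is a minimal illustration, not the code from the original post: the class and method names (`SortedListIntersection`, `intersect`) are made up for this example, and it assumes document ids are plain integers sorted in ascending order with no duplicates.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.LinkedList;
import java.util.List;

// Hypothetical helper for illustration only.
final class SortedListIntersection {

    // Intersects two ascending, duplicate-free lists of document ids
    // by walking both with iterators and skipping the smaller side.
    static List<Integer> intersect(List<Integer> a, List<Integer> b) {
        List<Integer> result = new ArrayList<>();
        Iterator<Integer> itA = a.iterator();
        Iterator<Integer> itB = b.iterator();
        if (!itA.hasNext() || !itB.hasNext()) {
            return result; // one list is empty, so nothing can match
        }
        int idA = itA.next();
        int idB = itB.next();
        while (true) {
            if (idA == idB) {
                // Step 2: same document in both lists.
                result.add(idA);
                if (!itA.hasNext() || !itB.hasNext()) break; // step 4
                idA = itA.next();
                idB = itB.next();
            } else if (idA < idB) {
                // Step 3: A is behind, skip forward in A.
                if (!itA.hasNext()) break; // step 4
                idA = itA.next();
            } else {
                // Step 3: B is behind, skip forward in B.
                if (!itB.hasNext()) break; // step 4
                idB = itB.next();
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<Integer> a = new LinkedList<>(List.of(1, 4, 7, 9, 11, 31, 37, 56, 143, 200, 900, 3422));
        List<Integer> b = new LinkedList<>(List.of(1, 29, 37, 56, 142));
        System.out.println(intersect(a, b)); // prints [1, 37, 56]
    }
}
```

With the lists from the example, the loop stops as soon as B runs out after 142, so 200, 900 and 3422 in A are never visited.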

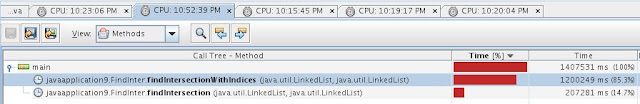

Run-time performance profiling of the set intersection implemented using (a) sorted linked lists (skipping using linked list iterators), (b) sorted linked list indices (age-old ++ index for skipping), and (c) a splay tree. The difference in the code for cases (a) and (b) is trivial in appearance but large in efficiency.

Partial code snapshot of iterator based skipping.

Partial code snapshot of index ++ skipping.

In the code above, the bulk of the time is spent in "get(n)"-type methods of the Java LinkedList collection, but far less in the iterator's next() method. This is evident here:

Performance profiling snapshots for finding the intersection of two lists, with sizes increasing through roughly 100, 1000, 10000, 100000 and 1000000, in order.

The observation is that when there are only a few items, skipping with indices is very fast. But as we increase the size of both lists, we have to abandon the splay tree / binary tree representation at around 10000 items. This is because fetching each item of a tree and then comparing it costs memory that both list-based approaches save. Moreover, when two trees have M and N items, we check whether each of the M items is present among the N. This takes O(M log N) time, which becomes bothersome when M and N are very large.
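To make the M log N cost concrete, here is a rough sketch of the tree-based lookup. It uses `java.util.TreeSet`, which is a red-black tree, as a stand-in for the splay tree used in the profiling, so it illustrates the shape of the approach rather than the measured code. The class name `TreeIntersection` is made up for this example.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.TreeSet;

// Hypothetical helper; TreeSet stands in for the splay tree.
final class TreeIntersection {

    // For each of the M ids in one tree, do an O(log N) lookup in the other:
    // about M log N comparisons in total.
    static List<Integer> intersect(TreeSet<Integer> m, TreeSet<Integer> n) {
        List<Integer> result = new ArrayList<>();
        for (Integer id : m) {        // in-order walk over M items
            if (n.contains(id)) {     // balanced-tree lookup, O(log N)
                result.add(id);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        TreeSet<Integer> a = new TreeSet<>(List.of(1, 4, 7, 9, 11, 31, 37, 56, 143, 200, 900, 3422));
        TreeSet<Integer> b = new TreeSet<>(List.of(1, 29, 37, 56, 142));
        System.out.println(intersect(b, a)); // prints [1, 37, 56]
    }
}
```

Iterating over the smaller tree and probing the larger one keeps M small, but every probe still starts from the root, whereas the sorted-list walk never revisits an element.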

So we are down to two contenders. Profiling index-based skipping gives a shock as we increase the list sizes again: the sorted linked list with iterator skipping is far faster than the index version (age-old ++ index). For example, with list sizes of 500000 and 150000, the iterator-based algorithm finds the intersection in 3.45 minutes, whereas skipping with integer indices and getAt(n)-style methods takes around 20 minutes. Again, as mentioned before, the iterator's next() is faster on a Java LinkedList than a getIndex(n)-style lookup. That is expected: a LinkedList has to walk its nodes from one end of the list on every indexed lookup, while next() just follows one link.
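For contrast with iterator-based skipping, the index-based variant might look like the sketch below. It is an assumption about the shape of that code, not the original snapshot, and the class name `IndexSkipping` is invented for illustration. The algorithm is the same; only the way elements are fetched differs.

```java
import java.util.ArrayList;
import java.util.LinkedList;
import java.util.List;

// Hypothetical helper showing "++ index" skipping.
final class IndexSkipping {

    // Same two-pointer walk, but each element is fetched with get(index).
    // On a java.util.LinkedList, get(index) traverses nodes from the nearer
    // end of the list on every call, so the total work grows roughly
    // quadratically with list size instead of linearly.
    static List<Integer> intersectByIndex(List<Integer> a, List<Integer> b) {
        List<Integer> result = new ArrayList<>();
        int i = 0;
        int j = 0;
        while (i < a.size() && j < b.size()) {
            int idA = a.get(i); // linear-time lookup on a LinkedList
            int idB = b.get(j); // linear-time lookup on a LinkedList
            if (idA == idB) {
                result.add(idA);
                i++;
                j++;
            } else if (idA < idB) {
                i++; // skip forward in A
            } else {
                j++; // skip forward in B
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<Integer> a = new LinkedList<>(List.of(1, 4, 7, 9, 11, 31, 37, 56, 143, 200, 900, 3422));
        List<Integer> b = new LinkedList<>(List.of(1, 29, 37, 56, 142));
        System.out.println(intersectByIndex(a, b)); // prints [1, 37, 56]
    }
}
```

The same method is cheap on an `ArrayList`, where `get(index)` is constant time, which is why the two versions look almost identical in source yet profile so differently on linked lists.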

For multiple sorted lists, we can find the intersection by taking the two smallest lists and intersecting them as above, then intersecting the result with the next smallest list, and so on until only one list is left. So by using sorted lists for the documents of a given word, we can speed up the set intersection part of our search engine substantially (roughly six times faster than index-based skipping in the run above). The catch is that we need to keep the documents for each word in sorted order. Some housekeeping does help, it seems!
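A possible sketch of the multi-list case is shown below. The names `MultiListIntersection`, `intersectTwo` and `intersectAll` are hypothetical, and the lists are again assumed to hold ascending, duplicate-free integer ids.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;

// Hypothetical helper for illustration only.
final class MultiListIntersection {

    // Iterator-based intersection of two ascending, duplicate-free lists.
    static List<Integer> intersectTwo(List<Integer> a, List<Integer> b) {
        List<Integer> out = new ArrayList<>();
        Iterator<Integer> itA = a.iterator();
        Iterator<Integer> itB = b.iterator();
        if (!itA.hasNext() || !itB.hasNext()) return out;
        int idA = itA.next();
        int idB = itB.next();
        while (true) {
            if (idA == idB) {
                out.add(idA);
                if (!itA.hasNext() || !itB.hasNext()) break;
                idA = itA.next();
                idB = itB.next();
            } else if (idA < idB) {
                if (!itA.hasNext()) break;
                idA = itA.next();
            } else {
                if (!itB.hasNext()) break;
                idB = itB.next();
            }
        }
        return out;
    }

    // Intersects any number of lists, smallest first, so that the running
    // result (never larger than the smallest list) stays short.
    static List<Integer> intersectAll(List<List<Integer>> lists) {
        if (lists.isEmpty()) return new ArrayList<>();
        List<List<Integer>> bySize = new ArrayList<>(lists);
        bySize.sort((x, y) -> Integer.compare(x.size(), y.size()));
        List<Integer> result = bySize.get(0);
        // Stop early once the running intersection is empty.
        for (int k = 1; k < bySize.size() && !result.isEmpty(); k++) {
            result = intersectTwo(result, bySize.get(k));
        }
        return new ArrayList<>(result);
    }

    public static void main(String[] args) {
        List<List<Integer>> lists = List.of(
            List.of(1, 4, 7, 9, 11, 31, 37, 56, 143, 200, 900, 3422),
            List.of(1, 29, 37, 56, 142),
            List.of(37, 56, 60));
        System.out.println(intersectAll(lists)); // prints [37, 56]
    }
}
```

Starting from the smallest lists means the running intersection can only shrink, so the later and longer lists are mostly skipped through rather than compared element by element.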
